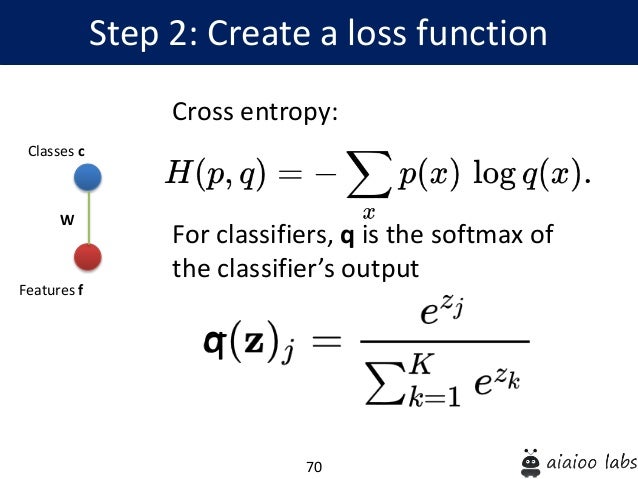

Cross-entropy is a popular loss function for classification problems, and PyTorch provides a simple and efficient way to compute it with `nn.CrossEntropyLoss`.

The code for this example imports `torch` and `torch.nn as nn`, creates the predicted probability tensor `p` and the actual label tensor `y` with `torch.tensor()`, creates the loss function with `criterion = nn.CrossEntropyLoss()`, calculates the loss with `loss = criterion(p, y)`, and prints it with `print(loss)`.

In this example, we created a predicted probability tensor `p` with two samples and three classes, and an actual label tensor `y` with two samples. We then created an instance of `nn.CrossEntropyLoss` and passed `p` and `y` to it. Finally, we called `backward()` on the loss tensor to compute the gradients, which requires `p` to have been created with `requires_grad=True`. The output of this code is the cross-entropy loss between `p` and `y`.

One detail to keep in mind: `nn.CrossEntropyLoss` applies log-softmax to its input internally, so it interprets `p` as unnormalized scores (logits). If `p` already holds probabilities, the value it returns is not the cross-entropy of those probabilities; in that case, pass `torch.log(p)` to `nn.NLLLoss` instead.

In this post, we have discussed how to calculate cross-entropy from probabilities in PyTorch. By following the steps outlined here, you should be able to calculate cross-entropy loss in your own PyTorch models.
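A minimal sketch of the computation for two samples and three classes is shown here. The values in `p` and `y` are made up for illustration, since the original tensor values are not available. It computes the cross-entropy of the probabilities with `nn.NLLLoss` on `torch.log(p)`, checks it against the formula written out by hand, and also shows what `nn.CrossEntropyLoss` returns when the same probabilities are passed in directly and treated as logits.

```python
import torch
import torch.nn as nn

# Hypothetical values: 2 samples, 3 classes; each row sums to 1.
p = torch.tensor([[0.7, 0.2, 0.1],
                  [0.1, 0.3, 0.6]], requires_grad=True)
# Class index of the correct label for each sample (also hypothetical).
y = torch.tensor([0, 2])

# Cross-entropy from probabilities: NLLLoss expects log-probabilities.
loss_from_probs = nn.NLLLoss()(torch.log(p), y)

# The same quantity by hand: mean over samples of -log p[i, y[i]].
manual = -torch.log(p[torch.arange(len(y)), y]).mean()

# Passing p directly: CrossEntropyLoss applies log-softmax first,
# so the probabilities are treated as logits and the result differs.
loss_as_logits = nn.CrossEntropyLoss()(p, y)

print(loss_from_probs, manual, loss_as_logits)

# Compute gradients of the loss with respect to p (stored in p.grad).
loss_from_probs.backward()
print(p.grad)
```

If the model outputs raw scores rather than probabilities, `nn.CrossEntropyLoss` can be applied to those scores directly, without a softmax layer in front of it.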
